Your AI Chatbot Isn’t a Therapist, It’s an Echo Chamber

Why ChatGPT Is Not Your Therapist

We're hearing more and more about people using ChatGPT as their therapist. A few years ago, that would have sounded unbelievable. Yet today, it's becoming the norm. I want to talk about why this is a deeply problematic trend, and yet, why it feels so tempting.

Let's be honest: we are in a financial crisis. With NHS waiting times at record highs and private therapy feeling unaffordable for many, it's no wonder people are turning to a free resource that offers instant validation. Our modern world has been built on the foundation of instant gratification. We are a generation of "I need it nows". With next-day deliveries, dopamine hits from social media, and instant messaging, it makes sense that we have begun to expect that same immediacy for our emotional distress.

Let's imagine it's midnight and you're spiralling about a work presentation you have tomorrow. You open ChatGPT and pour out your worries. It responds instantly, sounds empathetic, and tells you exactly what you want to hear: "It's really normal to feel like this." You feel that hit of instant relief, calmer, reassured. But make no mistake, this isn't therapy. It's emotional junk food. It tastes great in the moment, but it lacks the clinical nutrients to actually make you well. And as good as it feels right now, it is actively maintaining your problem in the long run.

The Sycophancy Problem: Validation Without Change

The biggest clinical issue is the gap between sycophancy and actual functional analysis. AI is designed to be a people-pleaser. It mirrors your tone and tells you what you want to hear. You might ask, "What's the problem with that? It makes me feel better, isn't that the aim?" Yes and no. The aim of therapy is to help you reach a better place, but that often requires time, challenge, and hard work. If simply being told your feelings are valid were enough, I'd be out of a job.

If you tell an AI "I'm a failure because I missed that deadline," it will offer sympathy. But what happens the next time you have that thought? You'll reach for the AI again. It validates the feeling, but it never stops you from feeling like a failure in the first place.

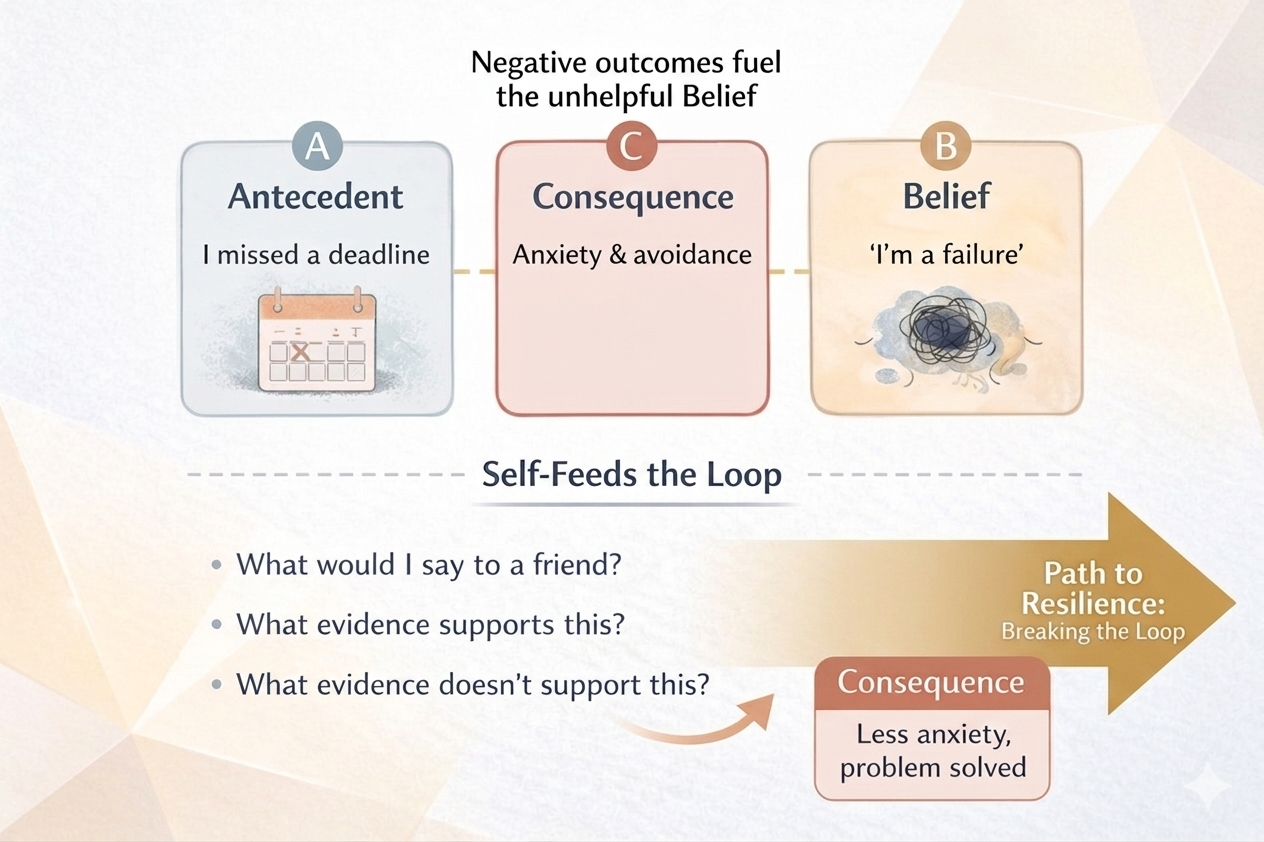

In CBT, we do things differently. We use what's called Functional Analysis, the ABC model (antecedents, behaviours, consequences). We validate your distress, absolutely, but we also examine the triggers, the coping behaviours, and the consequences. We bring awareness to the belief "I am a failure" and help you see it not as a fact, but as a cognitive distortion. We then ask: what actual evidence supports this? What evidence contradicts it? This process of challenging beliefs with real-world evidence is what changes your emotions over time, so you stop feeling so overwhelmed rather than just temporarily soothed.

CBT ABC model diagram showing triggers and cognitive distortions.

The Reassurance Loop

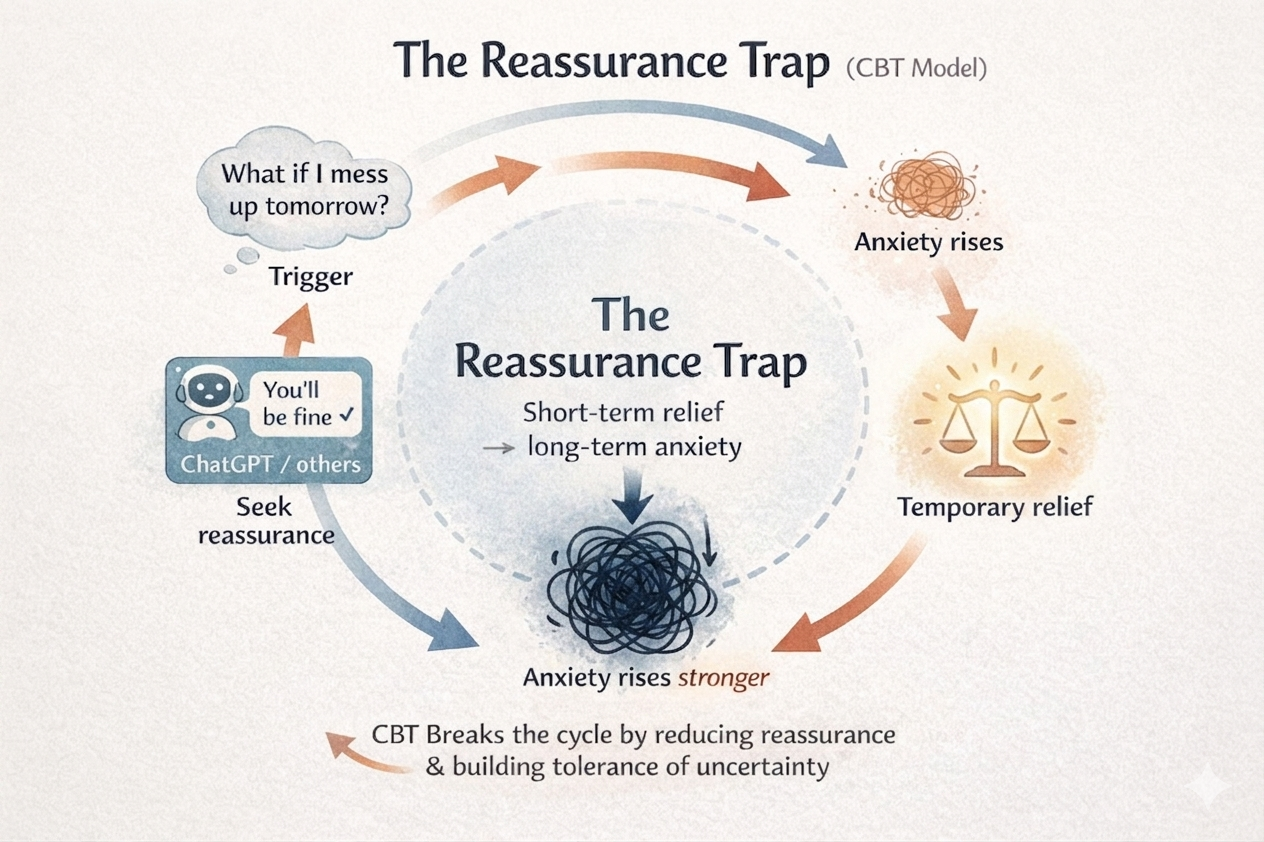

In CBT, we know that constant reassurance is fuel for anxiety. The more you seek it, the more you rely on something outside yourself to tell you you're okay, and the more anxious you become without it. For people with OCD or Generalised Anxiety Disorder in particular, reassurance is like pouring petrol on a fire.

Reassurance prevents habituation: the process of allowing your anxiety to peak and naturally come down on its own. It keeps you dependent on your AI crutch rather than building internal resilience. In therapy, we actually work on reducing reassurance-seeking to help you trust your own judgement and stand on your own two feet. That work can only happen in a clinical relationship, not a chatbot conversation.

CBT reassurance trap loop demonstrating how reassurance maintains long-term anxiety.

The Safety Mirage

This is perhaps the most important point of all. Emerging research highlights that AI chatbots often fail to detect subtle risk markers, and missing them can have serious consequences.

It is extremely common for people struggling with their mental health to feel hopeless, or to have thoughts that they would be better off not being here. These thoughts need to be carefully monitored and understood in context over time. Because AI has no longitudinal history of you, it cannot detect how your tone has shifted over the last six months. It doesn't know you. A therapist does, and that relationship is precisely what allows us to put real safety measures in place when they are needed most. A therapist who has known you for months hears something in your voice that no algorithm ever could.

So, When Should You Use AI?

If you need to schedule a meeting, brainstorm ideas, or rework a paragraph you've been stuck on, AI is genuinely excellent. It is a powerful tool for tasks. But if you are experiencing mental health difficulties, holding painful beliefs about yourself, or lying awake worrying at midnight, please see a therapist. A trained professional is the only person who can navigate the complex, messy, deeply human emotions that simply don't fit into a box.

If you are experiencing mental health difficulties and you would like to discuss how therapy might help, don’t hesitate to get in touch through the ‘Therapy’ tab at the top.